Hi again. Last time I talked about the IScrollInfo interface and how it is implemented in my SurfacePagePanel. Now it’s time to talk about ISurfaceScrollInfo! As I said in the last post, ISurfaceScrollInfo extends the IScrollInfo interface. It adds the ability to react to two basic NUI (Natural User Interface) gestures associated with the Microsoft Surface: Panning and Flicking. Both are common NUI gestures, but I believe Panning is the more common and natural of the two.

So what are Panning and Flicking? If you are not interested in reading my explanation you can skip this section! Panning and Flicking are like moving an object, say an apple. Panning is equivalent to picking up the apple and placing it gently on another spot: the movement begins when you pick it up and stops when you put it back down. You as a user are in control of its movement. Flicking is more like throwing the apple: once it leaves your hand you are no longer directly in control of its movement. The moment you release your contact from the Microsoft Surface, the virtual physics kicks in and scrolls the item list until it stops.

ISurfaceScrollInfo extends IScrollInfo with three new methods, which help you control the scrolling when Panning and Flicking:

- ConvertFromViewportUnits(origin, offset) : vector - Converts horizontal and vertical offsets, in viewport units, to device-independent units that are relative to the given origin in viewport units.

- ConvertToViewportUnits(origin, offset) : vector - Converts horizontal and vertical offsets to viewport units, given an offset in device-independent units that are relative to the given origin in viewport units.

- ConvertToViewportUnitsForFlick(origin, offset) : vector - Converts horizontal and vertical offsets to viewport units, given an offset in device-independent units that are relative to the given origin in viewport units.

I’ve inserted the actual documentation summary from MSDN for each method. The documentation also says that the results from the two "convert to" methods are later used when setting the vertical and horizontal offsets (using the SetHorizontalOffset and SetVerticalOffset methods from the IScrollInfo interface). To be more precise, ConvertToViewportUnits is called continuously during panning, and ConvertToViewportUnitsForFlick is called once the panning is complete, if needed. The documentation isn’t that clear on when ConvertFromViewportUnits is called by the framework, but it is supposed to reverse the conversion done by the "convert to" methods.

So how are these methods implemented in SurfacePagePanel? The standard implementation would be to just return the offset argument, as is done in the Continuous Planning List. But in my case I want to control the panning and flicking. Unlike how the UI works on the iPhone and Android-based phones, I want to constrain the panning movement. The constraint is to keep the currently focused page in the center, while you should still be able to peek at the next item on each side of the page. I enforce this constraint in the ConvertToViewportUnits method:

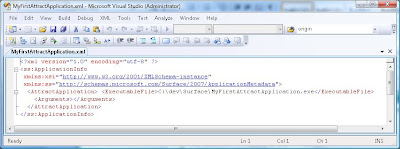

    public Vector ConvertToViewportUnits(Point origin, Vector offset)
    {
        // No panning while _isMoving is set or before a panning origin has been recorded.
        if (_isMoving || !_panningOrigin.HasValue)
        {
            return new Vector(0.0, 0.0);
        }

        var absOffset = Math.Abs(GetScrollOwnerElasticityLength());
        var direction = offset.X / absOffset;
        const int scaleFactor = 4; // trial-and-error factor for a better user experience.

        // Cap the horizontal offset logarithmically.
        // Note: Math.Log10 returns a negative value for inputs below 1.
        var offsetChoice = Math.Min(absOffset, Math.Log10(absOffset) * scaleFactor);

        // Only the horizontal component is constrained; the vertical one passes through.
        return new Vector(offsetChoice * direction, offset.Y);
    }

The important part here is that I use a logarithmic calculation to enforce the constraint, since it caps the horizontal offset. This is one example of how you can alter the panning.
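To make the logarithmic idea easier to experiment with, here is a minimal, self-contained sketch that works on plain doubles instead of WPF types. `PanDamping` and `Damp` are hypothetical names, not part of the Surface SDK or of SurfacePagePanel. The `1 +` inside the logarithm is an assumption added for this sketch so that the result always keeps the sign of the input; the method above does not do this.

```csharp
using System;

public static class PanDamping
{
    // Hypothetical helper for illustration only.
    // Compresses a raw pan offset logarithmically while keeping its sign.
    public static double Damp(double rawOffset, double scaleFactor)
    {
        double magnitude = Math.Abs(rawOffset);

        // Log10(1 + x) is zero at x = 0 and never negative for x >= 0,
        // so the damped magnitude cannot flip the direction.
        double damped = Math.Min(magnitude, Math.Log10(1.0 + magnitude) * scaleFactor);

        return Math.Sign(rawOffset) * damped;
    }

    public static void Main()
    {
        // Small offsets pass through unchanged, large ones are compressed.
        foreach (double raw in new[] { -200.0, -0.5, 0.0, 0.5, 200.0 })
        {
            Console.WriteLine("{0} -> {1:F3}", raw, Damp(raw, 4.0));
        }
    }
}
```

With a scale factor of 4, a raw offset of 200 is compressed to a little over 9, which is the kind of "peek but don't scroll away" behaviour the constraint is after.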

If we continue with ConvertToViewportUnitsForFlick, you will see that it is not as exciting as ConvertToViewportUnits:

    public Vector ConvertToViewportUnitsForFlick(Point origin, Vector offset)
    {
        _hasFlicked = true;
        return new Vector(0.0, 0.0);
    }

Here I return an empty vector, and there is a reason for it: it prevents the ScrollViewer from continuing to scroll after a flick. I use flicking as one of the ways to signal a page switch, so I need to control the scrolling myself. In my next and last post of this blog series I will talk about how I finalized my SurfacePagePanel.

Oh, by the way. Remember that the arguments to ConvertToViewportUnitsForFlick are based on the result from ConvertToViewportUnits. In my solution I got a nasty little side effect. The offset argument to ConvertToViewportUnitsForFlick can be used to determine the direction of the flick, but due to my calculation in ConvertToViewportUnits the flick direction was occasionally reversed. That is, when the user flicked to the left, the offset indicated right. I can’t explain why or how it was occasionally reversed, but it did happen.
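One candidate worth checking, offered as a guess and not verified against the real panel: Math.Log10 returns a negative number for any input below 1, so the `Math.Min(absOffset, Math.Log10(absOffset) * scaleFactor)` expression turns negative whenever `absOffset` drops below 1, and multiplying by `direction` then flips the sign. Whether GetScrollOwnerElasticityLength() ever returns such small values is not something this post establishes. The sketch below isolates just that expression; `FlickSignCheck` and `OffsetChoice` are hypothetical names used only for the experiment.

```csharp
using System;

public static class FlickSignCheck
{
    // Same shape as the expression in ConvertToViewportUnits,
    // pulled out so its sign can be inspected in isolation.
    public static double OffsetChoice(double absOffset, double scaleFactor)
    {
        return Math.Min(absOffset, Math.Log10(absOffset) * scaleFactor);
    }

    public static void Main()
    {
        // For inputs above 1 the logarithm is positive, so the result is positive.
        Console.WriteLine(OffsetChoice(50.0, 4.0) > 0);

        // For inputs below 1 the logarithm is negative, so Math.Min picks a negative value.
        Console.WriteLine(OffsetChoice(0.5, 4.0) < 0);
    }
}
```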

So what did I do for the implementation of ConvertFromViewportUnits? Well, I used the standard implementation and returned the offset argument. As I don’t see any negative side effects in doing that, I’ll leave it at that. Besides, I’m not really sure how I should properly implement it in my case. If you know more about ConvertFromViewportUnits and want to share it with me, feel free to send me an email explaining it! To prevent any spam mails, my email is: first name dot surname at Connecta dot se. My first name and surname are shown as the author of this post.

With this, I have gone through the methods in the ISurfaceScrollInfo interface, and this post marks the end of implementing ISurfaceScrollInfo. I still feel like writing one more blog post to wrap things up with my SurfacePagePanel, so stay tuned for the epilogue of the ISurfaceScrollInfo and You series.